Witcher 3: Wild Hunt - AMD GPU Shoot-Out

With the release of The Witcher 3: Wild Hunt, I wanted to take a look at how the game runs on a variety of GPUs, display configurations, and quality settings. I wanted to see what frame rates you could expect with each combination of GPU, display configuration and quality setting. This would allow me to help gamers figure out what GPU they might want to upgrade to, and/or see how a display upgrade would affect their performance.

With the release of the AMD Catalyst 15.5 Beta driver, I was finally able to do that. I spent a few weeks testing all the different iterations, but publishing of this article took a pause as I dealt with the arrival of the latest shipment of WSGF Stands (and filling those orders).

Back at the beginning of 2015, Club 3D reached out to the WSGF about reviewing some of their products. We previously took a look at their USB 3.0 4k UHD Graphics Adapter, and their MST Hubs (video review and article). They also provided us with a number of video cards for benchmarking and testing - R9 295X2, R9 290X royalAce, and the R9 285 royalQueen. This helped fill out our GPU stack, as we already had an R9 290 and R9 280X on hand.

Testing Notes

Test Rig

The system used an AMD FX-9590 with a closed-loop water cooler, 16GB RAM, and a Crucial M4 512GB SSD. I tested in late May right after the 15.5 Beta came out. It's taken me a while to get around to doing the graphs and the article, due to the "Standpocalypse". In the meantime the 15.7 WHQL drivers have been released, which rolled in the Witcher 3 CFX support and performance improvements.

I did see a further slight improvement when I did some spot checking with the 15.7 WHQL driver and the R9 295X2, but nothing to make me retest. The averages were a couple of fps higher, but I did see some more noticeable differences with the Min and Max. Each was a number of fps (maybe 3-5) closer to the average. In the end, the average is close to the same, but with less variance, and possibly some improvements in frame pacing. I reached out to AMD, and they confirmed that there were no additional improvements in the 15.7 driver specific to The Witcher 3. Any improvements would be the result of general DX11 optimizations in the new driver.

Testing Methodology

The test run was a circuit in the first village - starting from the signpost, to the notice board, around to the river, across the river, and following the path back to the signpost.

At each quality setting, I would do one complete circuit to ensure that the assets were loaded. Without this there were significant frame rate drops at the start. After a few tests, I would reload the save and repeat. This was needed as time would pass and the lighting conditions would change significantly.
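If the frame rate logging happens to run through the warm-up circuit as well, the same effect can be had by dropping those readings before computing Max, Avg and Min. The sketch below is only an illustration: the readings are made up, and `summarize_run` and `warmup_samples` are names I've invented for it, not part of any capture tool.

```python
from statistics import mean

def summarize_run(fps_samples, warmup_samples=0):
    """Return (max, avg, min) fps after discarding the warm-up readings."""
    measured = fps_samples[warmup_samples:]
    if not measured:
        raise ValueError("no samples left after discarding warm-up")
    return max(measured), round(mean(measured), 1), min(measured)

# Made-up per-second fps readings. The first four stand in for the warm-up
# circuit, where asset loading drags the frame rate down.
samples = [12, 18, 25, 31, 44, 47, 42, 45, 40, 46]

summary = summarize_run(samples, warmup_samples=4)
print("Max / Avg / Min:", summary)
```

Leaving the warm-up readings in would pull both the average and the minimum down, which is why the first circuit is not counted.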

The quality settings were as follows: Low/Low, Med/Med, High/High, High/Ultra. The first setting is the post-processing, and the second is the primary graphics setting. I did follow the AMD guide for optimizing The Witcher 3 for AMD Radeon cards.

Charts & Graphs

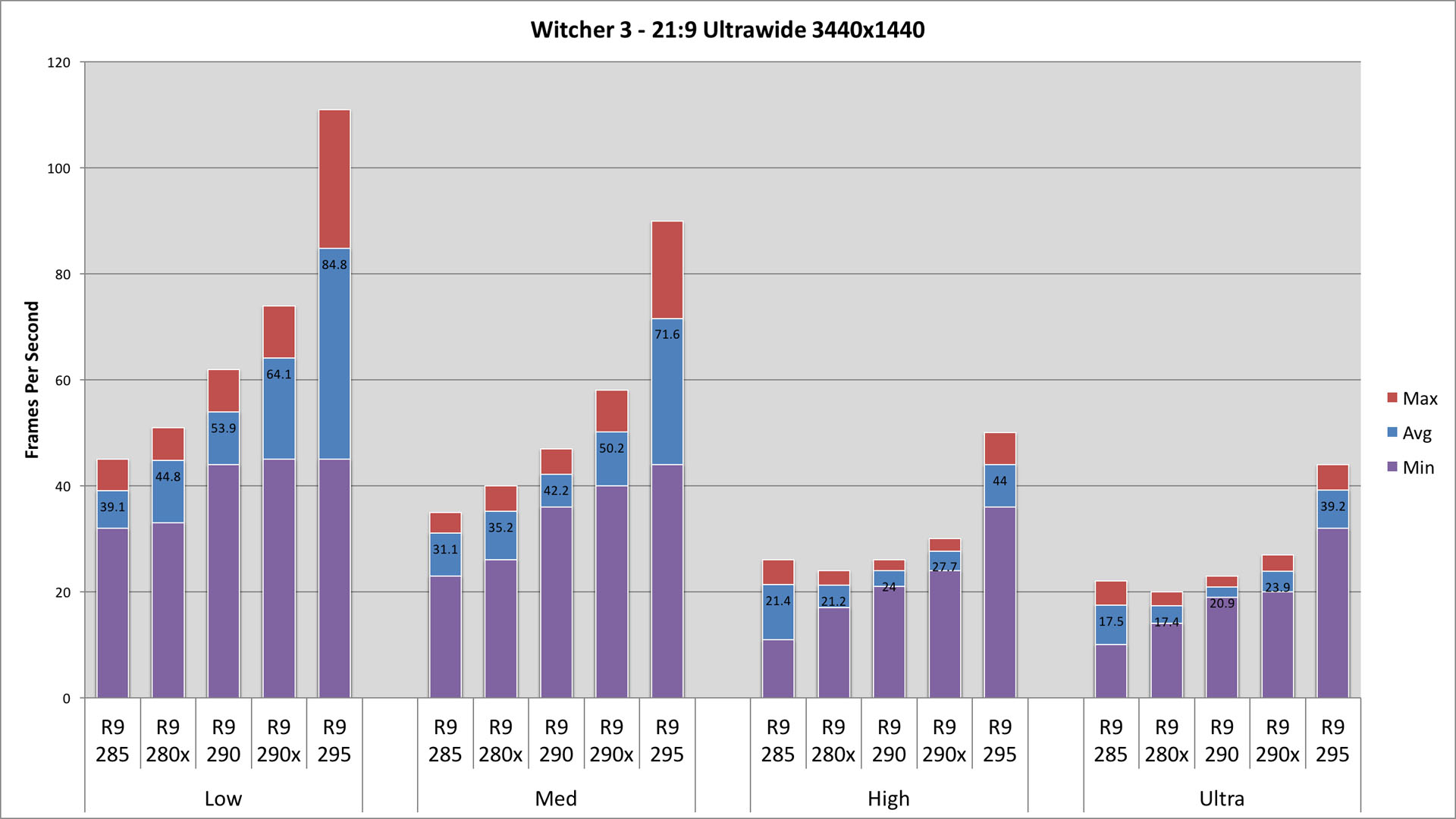

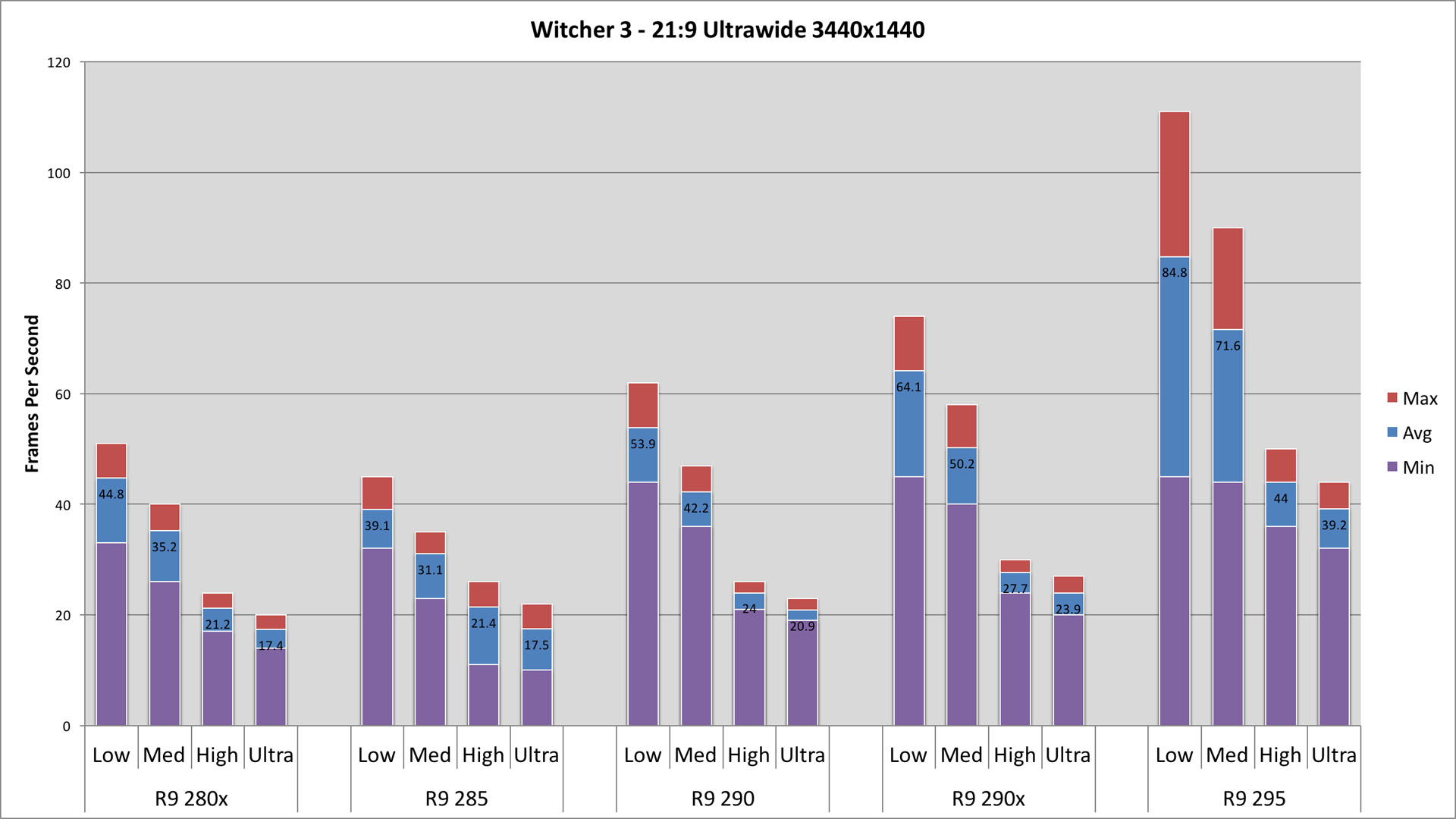

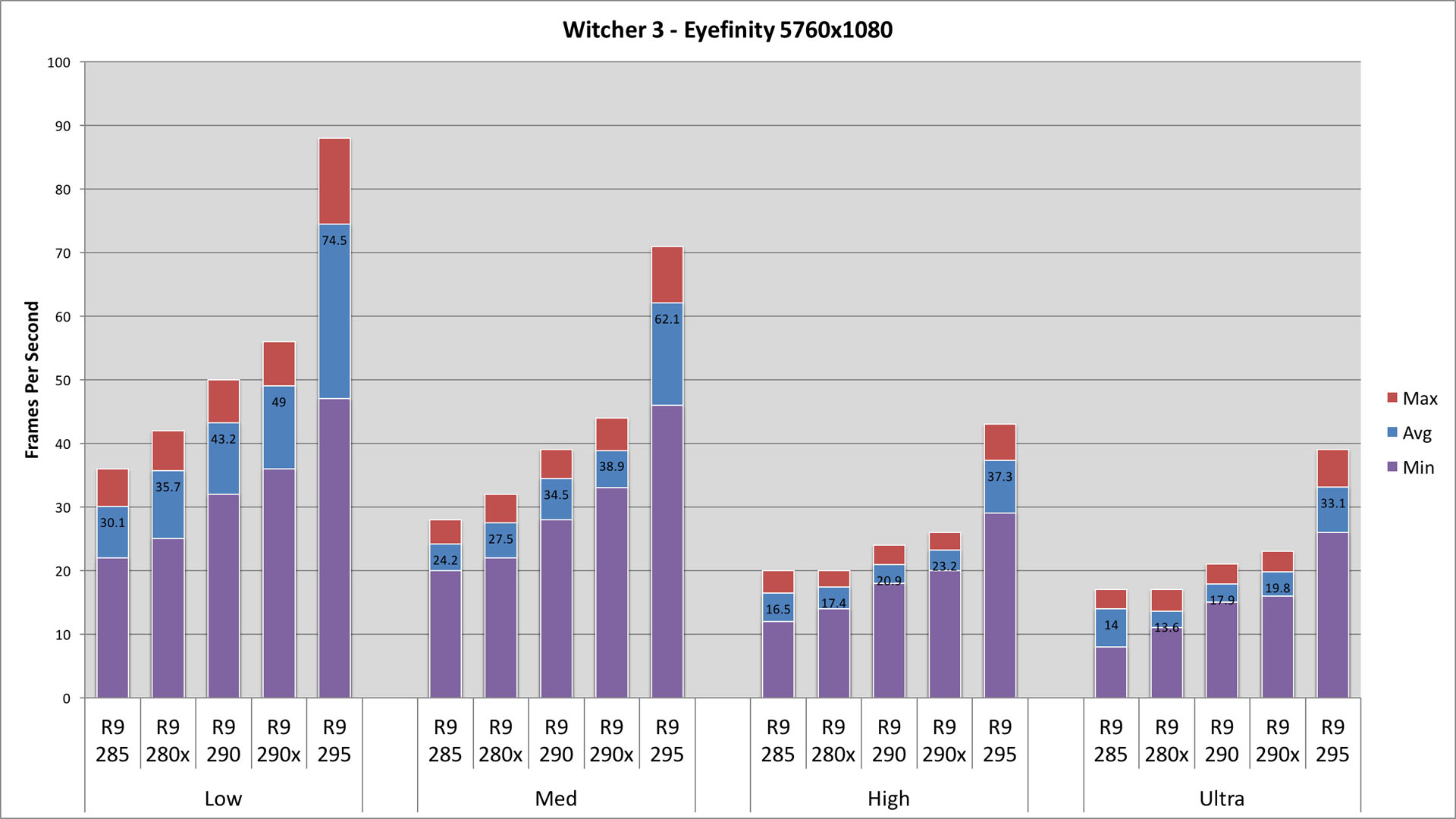

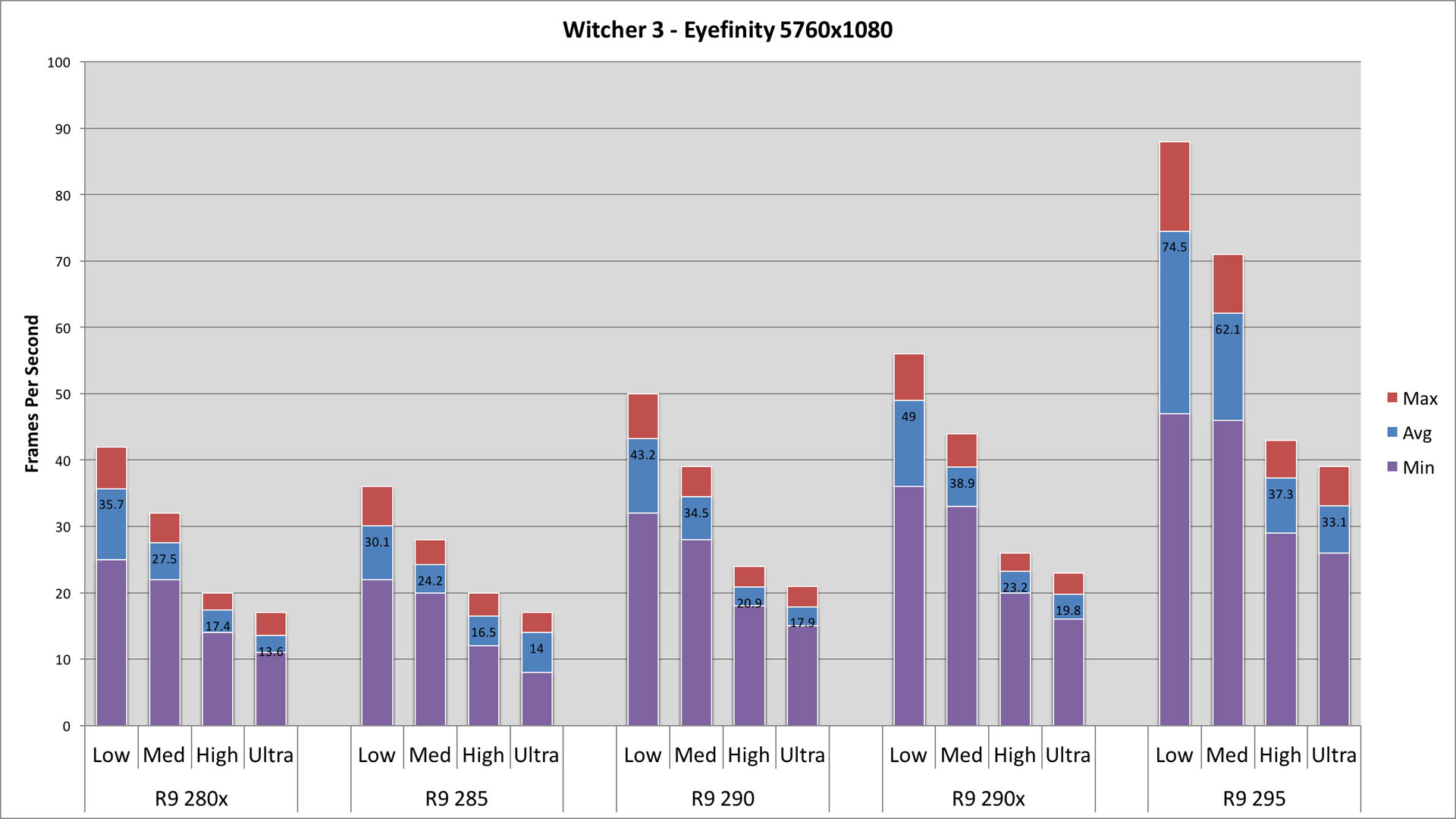

In each testing section you will find a table with fps for Max, Avg and Min. You will also find two charts. The first groups the quality settings together, so that you can compare cards at each setting. The second graph groups by card, so that you can see how the graphic settings compare for each card. The average fps value is on each graph as well.

21:9 Ultra-Wide 3440x1440

| GPU | Low Max | Low Avg | Low Min | Med Max | Med Avg | Med Min | High Max | High Avg | High Min | Ultra Max | Ultra Avg | Ultra Min |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| R9 285 | 45 | 39.1 | 32 | 35 | 31.1 | 23 | 26 | 21.4 | 11 | 22 | 17.5 | 10 |
| R9 280X | 51 | 44.8 | 33 | 40 | 35.2 | 26 | 24 | 21.2 | 17 | 20 | 17.4 | 14 |
| R9 290 | 62 | 53.9 | 44 | 47 | 42.2 | 36 | 26 | 24 | 21 | 23 | 20.9 | 19 |
| R9 290X | 74 | 64.1 | 45 | 58 | 50.2 | 40 | 30 | 27.7 | 24 | 27 | 23.9 | 20 |
| R9 295X2 | 111 | 84.8 | 45 | 90 | 71.6 | 44 | 50 | 44 | 36 | 44 | 39.2 | 32 |

Ultra-Wide 3440x1440 is our "low end" of testing. Some sites still test 1920x1080, or 2560x1440, but not here at the WSGF. This configuration - our least demanding - still has 4.95M pixels. This is about 2.4x the pixels of 1080p, and only 20% less than 3x1080p Eyefinity or Surround.
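For anyone who wants to check the pixel math, here is a small Python sketch. The resolutions are the ones tested in this article plus single 1080p as a reference; the helper names `pixels` and `ratio` are just mine.

```python
# Pixel counts for the display configurations in this article,
# plus single 1080p as a reference point.
CONFIGS = {
    "1080p": (1920, 1080),
    "Ultra-Wide": (3440, 1440),
    "Eyefinity": (5760, 1080),
    "4k UHD": (3840, 2160),
}

def pixels(name):
    """Total pixel count of a named configuration."""
    width, height = CONFIGS[name]
    return width * height

def ratio(a, b):
    """Pixel count of configuration a divided by that of configuration b."""
    return pixels(a) / pixels(b)

for name in CONFIGS:
    print(f"{name:<11} {pixels(name) / 1e6:5.2f}M pixels, "
          f"{ratio(name, '1080p'):.2f}x 1080p")

# Ultra-Wide as a share of the Eyefinity pixel count
print(f"Ultra-Wide vs Eyefinity: {ratio('Ultra-Wide', 'Eyefinity'):.1%}")
```

Note that a percentage difference depends on which configuration you treat as the baseline, so "X% fewer" in one direction is always a larger "Y% more" in the other.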

This is a tough resolution to start with, and low end cards will feel the pinch. At Low settings (which I don't recommend anyone use), minimum frame rates stay above 30fps - even on the R9 285. With the R9 290X, we see average fps crack the magical 60 mark. Given the nature of an RPG (and my own personal preference), I find that a consistent 40-50 fps is very playable.

On Medium settings, all cards hold an average above 30fps, with the R9 290 and above holding minimums above 30fps. In reality, the performance hit for Medium is not that much, while the graphical improvements are significant - particularly textures and draw distance.

High settings begin to bring a real challenge to the cards, with only the R9 295X2 hitting an average or minimum above 30fps. The shift from High to Ultra is not as noticeable, for either fps or graphical fidelity.
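The 30fps and 60fps cut-offs used in this section can be applied mechanically to the table. The sketch below copies the Avg and Min columns from the 3440x1440 table; the function `cards_meeting` and its parameters are my own naming, used here only to illustrate the filtering.

```python
# Avg and Min fps at 3440x1440, copied from the table above:
# {card: {setting: (avg, min)}}
ULTRAWIDE = {
    "R9 285":   {"Low": (39.1, 32), "Med": (31.1, 23), "High": (21.4, 11), "Ultra": (17.5, 10)},
    "R9 280X":  {"Low": (44.8, 33), "Med": (35.2, 26), "High": (21.2, 17), "Ultra": (17.4, 14)},
    "R9 290":   {"Low": (53.9, 44), "Med": (42.2, 36), "High": (24.0, 21), "Ultra": (20.9, 19)},
    "R9 290X":  {"Low": (64.1, 45), "Med": (50.2, 40), "High": (27.7, 24), "Ultra": (23.9, 20)},
    "R9 295X2": {"Low": (84.8, 45), "Med": (71.6, 44), "High": (44.0, 36), "Ultra": (39.2, 32)},
}

def cards_meeting(data, setting, avg_floor=30, min_floor=None):
    """Cards whose average (and optionally minimum) fps exceed the floors."""
    result = []
    for card, by_setting in data.items():
        avg, low = by_setting[setting]
        if avg > avg_floor and (min_floor is None or low > min_floor):
            result.append(card)
    return result

print("Med, avg > 30:         ", cards_meeting(ULTRAWIDE, "Med"))
print("Med, avg and min > 30: ", cards_meeting(ULTRAWIDE, "Med", min_floor=30))
print("High, avg > 30:        ", cards_meeting(ULTRAWIDE, "High"))
print("Low, avg > 60:         ", cards_meeting(ULTRAWIDE, "Low", avg_floor=60))
```

The floors are parameters on purpose, since what counts as playable is a personal call.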

Eyefinity 5760x1080

| GPU | Low Max | Low Avg | Low Min | Med Max | Med Avg | Med Min | High Max | High Avg | High Min | Ultra Max | Ultra Avg | Ultra Min |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| R9 285 | 36 | 30.1 | 22 | 28 | 24.2 | 20 | 20 | 16.5 | 12 | 17 | 14 | 8 |
| R9 280X | 42 | 35.7 | 25 | 32 | 27.5 | 22 | 20 | 17.4 | 14 | 17 | 13.6 | 11 |
| R9 290 | 50 | 43.2 | 32 | 39 | 34.5 | 28 | 24 | 20.9 | 18 | 21 | 17.9 | 15 |
| R9 290X | 56 | 49 | 36 | 44 | 38.9 | 33 | 26 | 23.2 | 20 | 23 | 19.8 | 16 |
| R9 295X2 | 88 | 74.5 | 47 | 71 | 62.1 | 46 | 43 | 37.3 | 29 | 39 | 33.1 | 26 |

3x 1080p multi-monitor is 75% the pixel count of 4k UHD, but is only about 26% more pixels than 3440x1440 Ultra-Wide (put the other way, Ultra-Wide has 20% fewer).

Eyefinity has about 26% more pixels than Ultra-Wide (or, measured the other way, Ultra-Wide has 20% fewer), and performance follows with a dip of 18% on average. There are a few areas where performance impacts are different:

- The multi-GPU R9 295X2 has performance hits closer to 13%, especially above Low settings

- Low Settings - Minimum frame rates take a hit of 25% for single GPU cards

- High Settings - Performance hits are closer to 15% on average, especially with a card in the 290 family
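The percentages above can be reproduced from the Avg columns of the Ultra-Wide and Eyefinity tables. This is a rough sketch of that arithmetic: it averages the per-card, per-setting drops with equal weight, which is my assumption about how to summarize them, and the names `drops`, `overall` and `x2_only` are illustrative.

```python
from statistics import mean

SETTINGS = ("Low", "Med", "High", "Ultra")

# Average fps per card in SETTINGS order, copied from the two tables.
ULTRAWIDE_AVG = {
    "R9 285":   (39.1, 31.1, 21.4, 17.5),
    "R9 280X":  (44.8, 35.2, 21.2, 17.4),
    "R9 290":   (53.9, 42.2, 24.0, 20.9),
    "R9 290X":  (64.1, 50.2, 27.7, 23.9),
    "R9 295X2": (84.8, 71.6, 44.0, 39.2),
}
EYEFINITY_AVG = {
    "R9 285":   (30.1, 24.2, 16.5, 14.0),
    "R9 280X":  (35.7, 27.5, 17.4, 13.6),
    "R9 290":   (43.2, 34.5, 20.9, 17.9),
    "R9 290X":  (49.0, 38.9, 23.2, 19.8),
    "R9 295X2": (74.5, 62.1, 37.3, 33.1),
}

def drops(before, after):
    """Fractional fps loss per card and setting when moving from before to after."""
    return {
        card: [1 - a / b for b, a in zip(before[card], after[card])]
        for card in before
    }

loss = drops(ULTRAWIDE_AVG, EYEFINITY_AVG)
overall = mean([d for per_card in loss.values() for d in per_card])
x2_only = mean(loss["R9 295X2"])

for card, per_card in loss.items():
    row = ", ".join(f"{s} {d:.0%}" for s, d in zip(SETTINGS, per_card))
    print(f"{card:<9} {row}")
print(f"Overall mean drop: {overall:.1%}, R9 295X2 mean drop: {x2_only:.1%}")
```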

To play at Medium settings, you need a card in the R9 290 family - the R9 285 and R9 280X just won't cut it. The only card I would consider playable at High settings is the R9 295X2, and even it wouldn't handle serious combat or Ultra settings.

The biggest performance gap continues to be between Medium and High settings.

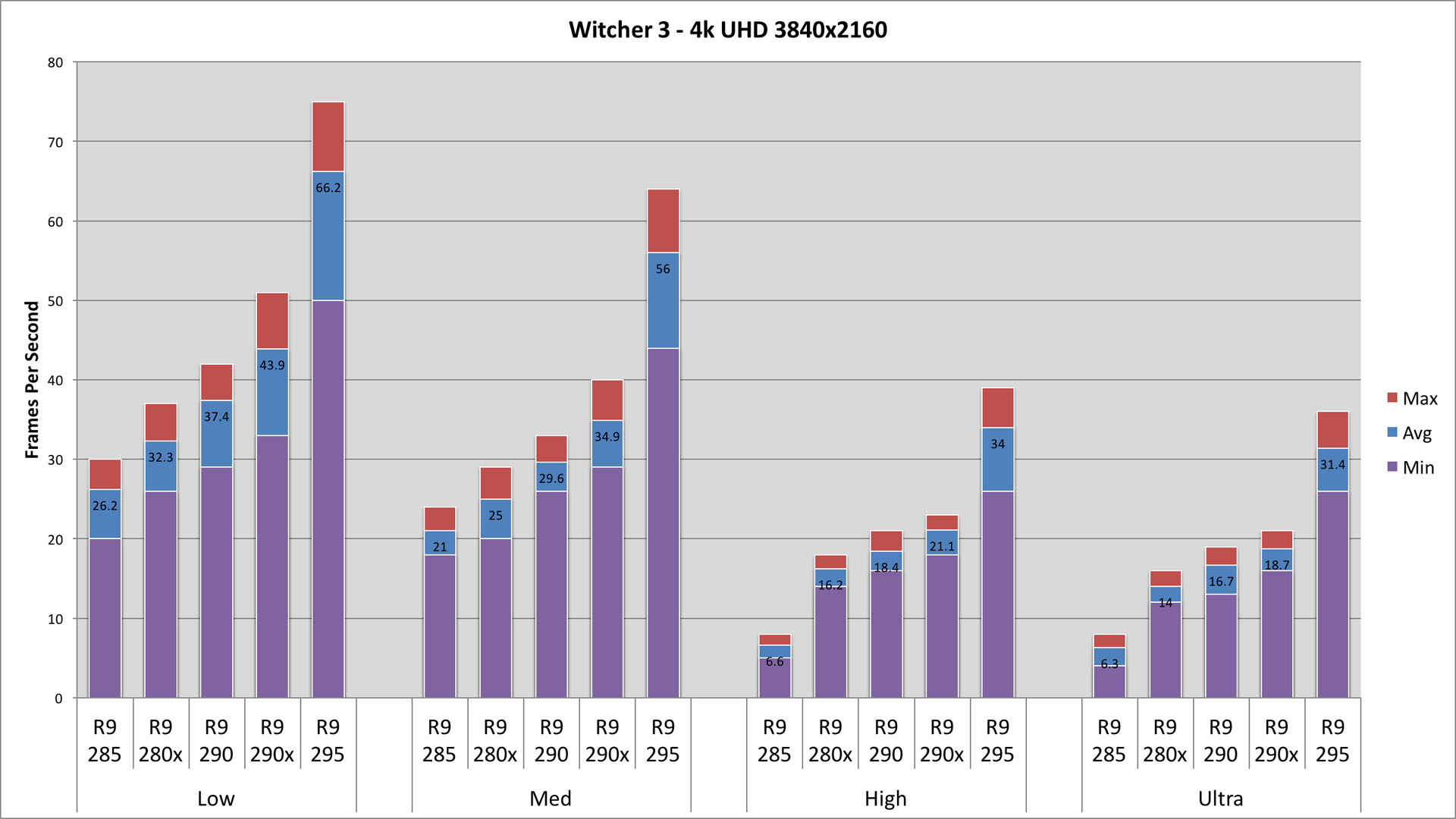

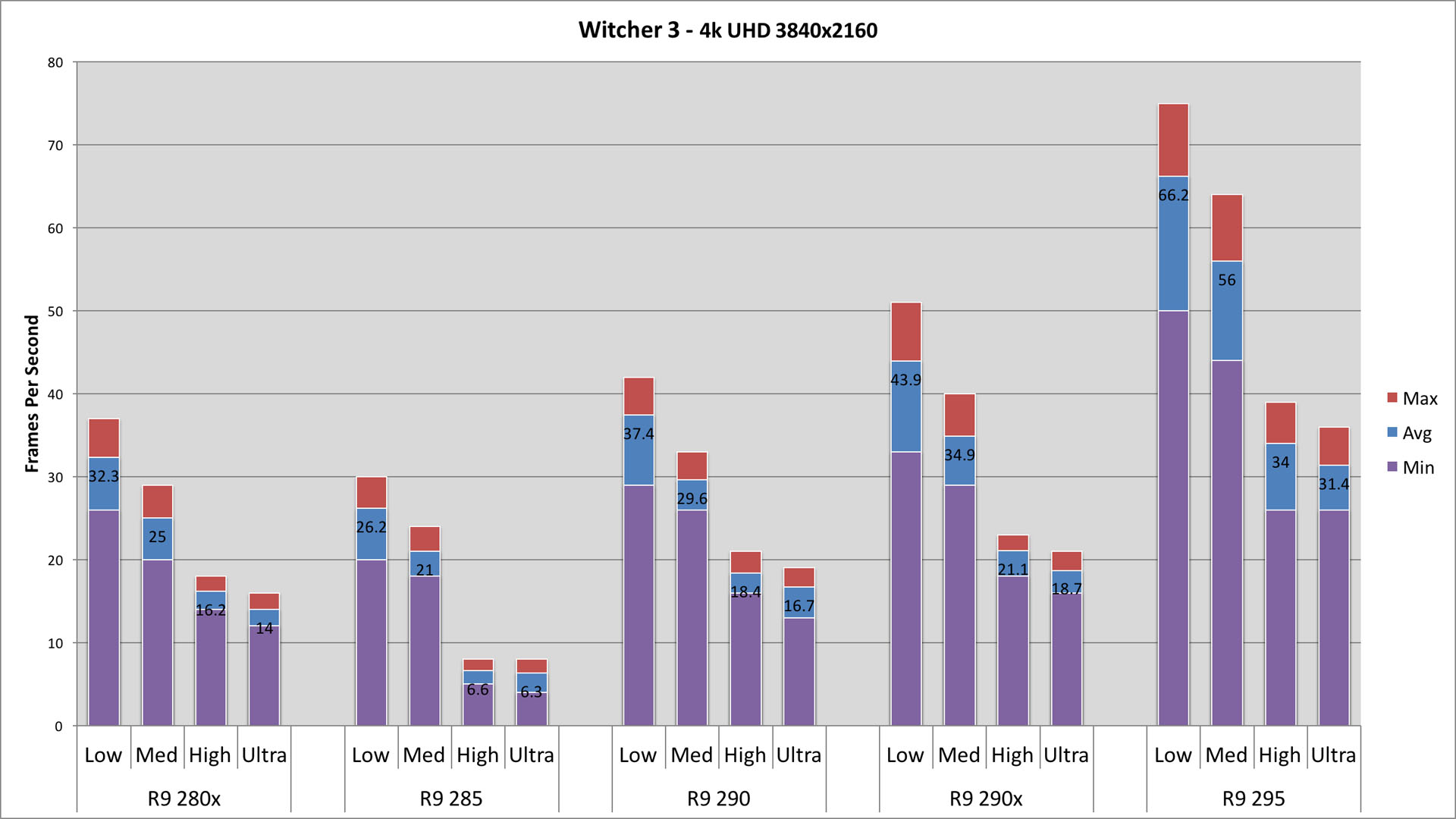

4k UHD 3840x2160

| GPU | Low Max | Low Avg | Low Min | Med Max | Med Avg | Med Min | High Max | High Avg | High Min | Ultra Max | Ultra Avg | Ultra Min |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| R9 285 | 30 | 26.2 | 20 | 24 | 21 | 18 | 8 | 6.6 | 5 | 8 | 6.3 | 4 |
| R9 280X | 37 | 32.3 | 26 | 29 | 25 | 20 | 18 | 16.2 | 14 | 16 | 14 | 12 |
| R9 290 | 42 | 37.4 | 29 | 33 | 29.6 | 26 | 21 | 18.4 | 16 | 19 | 16.7 | 13 |
| R9 290X | 51 | 43.9 | 33 | 40 | 34.9 | 29 | 23 | 21.1 | 18 | 21 | 18.7 | 16 |
| R9 295X2 | 75 | 66.2 | 50 | 64 | 56 | 44 | 39 | 34 | 26 | 36 | 31.4 | 26 |

4k UHD is 4x 1080p, increasing the pixel count from Eyefinity by 33% (Eyefinity has 25% fewer pixels). In spite of this increase in pixel count, performance only drops an average of 8%. There are a few exceptions to the rule:

- A number of minimum values stay the same (within a margin of 1-2fps), or actually improve

- The R9 285 sees a 50-60% performance drop at High and Ultra, managing only single-digit frame rates
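One way to make the exceptions stand out is to flag any card whose loss is far larger than what the other cards see at the same setting. The sketch below uses the Avg columns from the Eyefinity and 4k UHD tables. The rule itself (more than twice the median loss) is an arbitrary threshold I picked for illustration, not something from the testing.

```python
from statistics import median

SETTINGS = ("Low", "Med", "High", "Ultra")

# Average fps per card in SETTINGS order, copied from the two tables.
EYEFINITY_AVG = {
    "R9 285":   (30.1, 24.2, 16.5, 14.0),
    "R9 280X":  (35.7, 27.5, 17.4, 13.6),
    "R9 290":   (43.2, 34.5, 20.9, 17.9),
    "R9 290X":  (49.0, 38.9, 23.2, 19.8),
    "R9 295X2": (74.5, 62.1, 37.3, 33.1),
}
UHD_AVG = {
    "R9 285":   (26.2, 21.0, 6.6, 6.3),
    "R9 280X":  (32.3, 25.0, 16.2, 14.0),
    "R9 290":   (37.4, 29.6, 18.4, 16.7),
    "R9 290X":  (43.9, 34.9, 21.1, 18.7),
    "R9 295X2": (66.2, 56.0, 34.0, 31.4),
}

def outliers(before, after, factor=2.0):
    """Per setting, list cards whose fps loss exceeds factor x the median loss."""
    flagged = {}
    for i, setting in enumerate(SETTINGS):
        loss = {card: 1 - after[card][i] / before[card][i] for card in before}
        cutoff = factor * median(loss.values())  # illustrative threshold only
        flagged[setting] = [card for card, d in loss.items() if d > cutoff]
    return flagged

flagged = outliers(EYEFINITY_AVG, UHD_AVG)
for setting, cards in flagged.items():
    print(f"{setting:<6} {cards if cards else 'no outliers'}")
```

With these numbers only the R9 285 is flagged, and only at High and Ultra.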

An R9 290X or 295X2 is needed to play at Medium settings. Any lesser card cannot deal with the demands of the game, unless you revert to Low settings. But, what is the point of Low settings at 4k UHD? You may be able to manually tweak settings between Medium and High with the R9 295X2, but even it is challenged with the High presets.

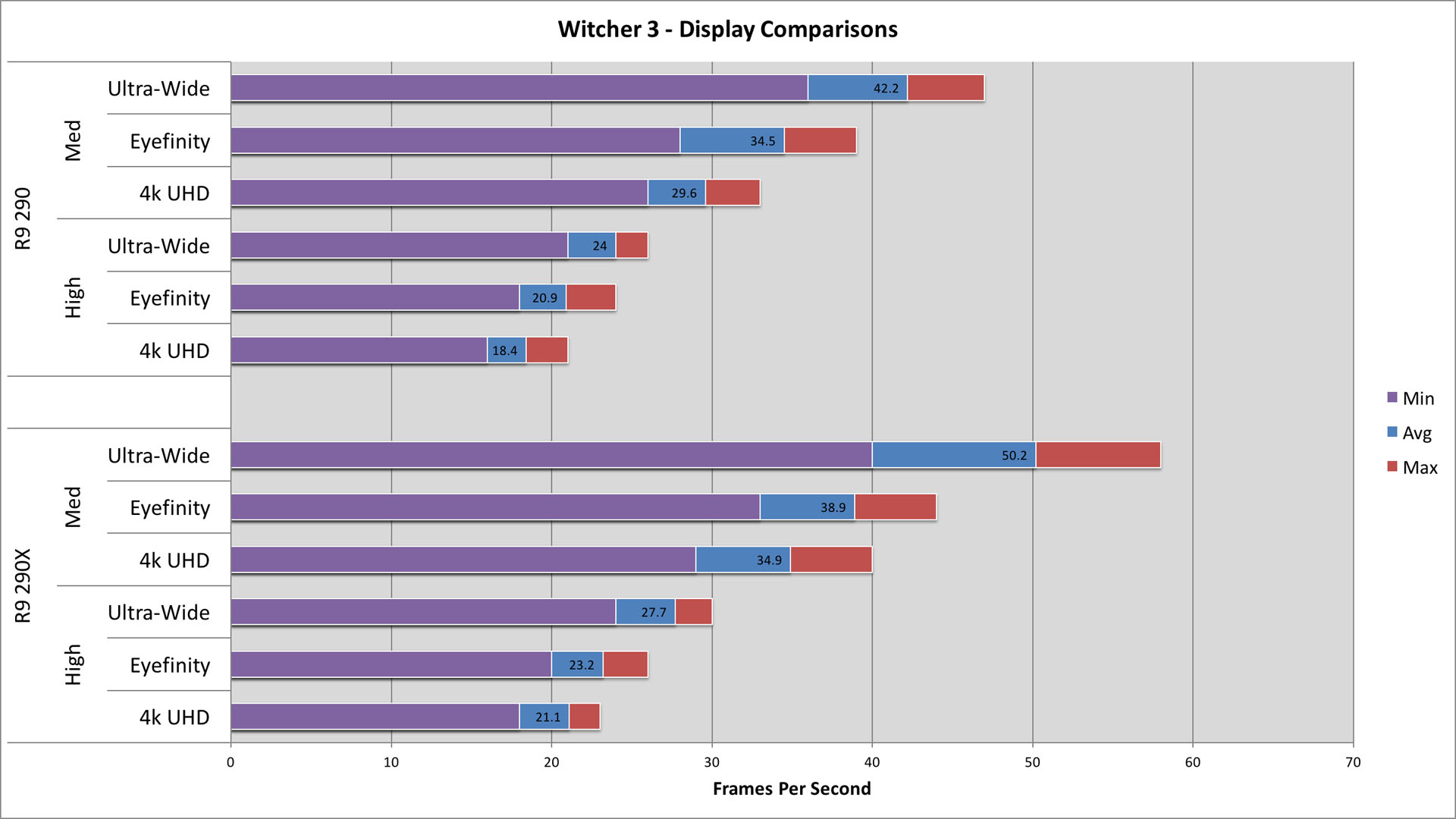

Conclusions & Display Comparisons

| Display | GPU | Med Max | Med Avg | Med Min | High Max | High Avg | High Min |
|---|---|---|---|---|---|---|---|
| Ultra-Wide | R9 290 | 47 | 42.2 | 36 | 26 | 24 | 21 |
| Eyefinity | R9 290 | 39 | 34.5 | 28 | 24 | 20.9 | 18 |
| 4k UHD | R9 290 | 33 | 29.6 | 26 | 21 | 18.4 | 16 |
| Ultra-Wide | R9 290X | 58 | 50.2 | 40 | 30 | 27.7 | 24 |
| Eyefinity | R9 290X | 44 | 38.9 | 33 | 26 | 23.2 | 20 |
| 4k UHD | R9 290X | 40 | 34.9 | 29 | 23 | 21.1 | 18 |

Looking at comparisons between configurations, moving between Eyefinity and 3440x1440 will see a performance shift of about 18% - on par with its 20% change in pixel count. However, moving between Eyefinity and 4k UHD only has an 8% shift in performance, even considering the 25% change in pixel count.

In the accompanying graph, I've looked at how the different display configurations compare at Medium and High on the R9 290 and R9 290X.

I threw out Low and Ultra for this comparison. Low was due to ugliness, and Ultra was due to the performance hit for very little visual improvement. And, I think most people will settle somewhere between Medium and High.

I threw out the R9 280 series cards for this comparison, as performance in most instances left a lot to be desired. The R9 280 cards will probably work well for single 1080p, or 2560x1080, but they just don't hold up here. And, I tossed the R9 295X2 due to cost and rarity among gamers.

What we're left with is an interesting comparison. The R9 290X brings performance improvements, but they are mostly noticed on Medium settings. Performance delta on High is minimal.

It's also easy to see how the graphical quality settings have a major impact, even over that of resolution. We consistently see higher frame rates with 4k UHD @ Medium, versus 3440x1440 Ultra-Wide @ High.
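To put numbers on that comparison, the sketch below pairs the average fps from the comparison table with the pixel counts of the two configurations. The helper name `medium_uhd_advantage` is mine and exists only for this example.

```python
# Average fps from the comparison table above.
AVG_FPS = {
    ("4k UHD", "R9 290"): {"Med": 29.6, "High": 18.4},
    ("4k UHD", "R9 290X"): {"Med": 34.9, "High": 21.1},
    ("Ultra-Wide", "R9 290"): {"Med": 42.2, "High": 24.0},
    ("Ultra-Wide", "R9 290X"): {"Med": 50.2, "High": 27.7},
}
PIXELS = {"Ultra-Wide": 3440 * 1440, "4k UHD": 3840 * 2160}

def medium_uhd_advantage(card):
    """Avg fps at 4k UHD Medium divided by avg fps at Ultra-Wide High."""
    return AVG_FPS[("4k UHD", card)]["Med"] / AVG_FPS[("Ultra-Wide", card)]["High"]

# How many times more pixels 4k UHD pushes than 3440x1440
pixel_ratio = PIXELS["4k UHD"] / PIXELS["Ultra-Wide"]

for card in ("R9 290", "R9 290X"):
    gain = medium_uhd_advantage(card) - 1
    print(f"{card}: 4k UHD @ Medium is {gain:.0%} faster than Ultra-Wide @ High "
          f"while rendering {pixel_ratio - 1:.0%} more pixels")
```

In other words, dropping one quality preset buys back more frame rate than the jump to 4k UHD costs, at least for these two cards.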

Saying that I'm a fan of Geralt of Rivia would be an accurate statement (if not an understatement). I read the first book (The Last Wish) when the first game came out. Since then I've waited for each subsequent book to be translated from Polish to English.

Considering the number of years since I read The Last Wish, I've gone back and started re-reading the series in anticipation of the release of The Witcher 3: Wild Hunt. I also picked up the graphic novels and the "universe" book that Dark Horse published. The first two Witcher games are two of the few games I've actually played to completion over the past few years. So yeah, I'm a fan.

The Witcher 3: Wild Hunt has not disappointed. And while I haven't been able to put in nearly as many hours as I've wanted, I have thoroughly enjoyed my time. The only downside is the demanding system requirements for the game. With the Radeon R9 295X2 and a 3440x1440 Ultra-Wide, I end up playing on Medium settings.

Now I just need to invest some time into tweaking my settings between Medium and High, and get some more time to play the game.